Surveys and Asking Questions

Surveys or questionnaires are probably the most used method of evaluation. They are flexible, quick to create, cheap to deliver, can be paper-based, digital or both, and a careful combination of questions can give you useful qualitative and quantitative evidence as well as demographic data about the audience. Surveys can provide evaluation data for a large exhibition, one case or a single object. A survey is often completed without input or intervention from an evaluator and so is limited to a predetermined set of questions.

How to do it

- Write your questions.

For demographic questions, check what is standard in your field or nationally recognised. For example, you might want to align your age categories with those used by Arts Council England or use the guidance from Stonewall on sexual orientation and gender identity. Only ask for demographic information that is relevant and that you can use in your analysis. Don’t choose categories that are too broad. This often happens with age categories: for example, try to avoid catch-all groups such as ‘65+’.

Use a variety of questions: open-ended, closed, free text, rating scales, etc. See the Ben Gammon document in the Further Reading section for a helpful guide to different sorts of questions. There is disagreement in the literature over whether you should have scale questions with an odd or even number of points. A scale with an odd number of points (1 to 5, for example) gives respondents the opportunity to give a neutral response by putting themselves in the middle. A scale with an even number of points (such as 1 to 10) forces people to have an opinion one way or the other, however marginally. All closed and scale questions should include an option for don’t know, no opinion, not applicable, or prefer not to say (particularly if you are asking for potentially sensitive information).

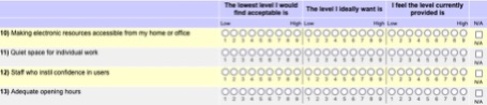

Figure 1: Example survey questions with odd number scale bars. It is not immediately obvious how to respond to each question: how many people will complete all 12 parts?

Don’t ask more than one thing in each question. It is very easy to add clauses to questions that make them difficult to answer, or difficult to analyse. In Figure 1 the first question asks about accessing electronic resources, but the answer might be different for the home or the office. To avoid confusing the respondent and the final report, these should be two separate questions. Don’t have too many questions: the more questions you ask, the more likely the participant is to leave the survey. Use questions with free text responses cautiously. People will quickly get tired of writing longer answers and you will not get better data with a greater number of questions. It should be possible to answer your question almost immediately. Try not to ask people for information that they do not have at the time or that they will need to gather from elsewhere. Don’t ask people to respond on behalf of someone else, for example don’t ask teachers to tell you what the students thought.

- Only ask questions that are relevant to your evaluation study.

This is especially important with demographic questions, which can appear invasive. Think carefully about how you will analyse and use the results of each question in your survey: you should have a clear reason to include it. Remember to ask some questions that allow for negative feedback.

- Balance the positive and negative questions.

Getting negative feedback from your evaluation participants can sometimes be difficult. In UCM, we have found that asking people to suggest three improvements to an exhibition, or to be specific in their criticism, has given us the best results. If you have a series of questions where you are asking people to rate on a scale, you could consider whether some of these are positive, and some are negative. Sometimes respondents can end up giving the same response to each question without thinking, so switching the way you ask questions might help them slow down. Pilot your survey to make sure the questions are clear. See the UCL Museum Wellbeing Measures Toolkit (in Further Reading) for their ‘Umbrellas’ that use both positive and negative terms for the participant to rate on a scale of 1 to 5.

- Order your questions.

Put your questions in a sensible order: group them so that you take the person on a logical journey through the questionnaire. Some experts recommend putting demographic questions at the beginning of the survey, to ease people in gently. Others suggest you end with demographic questions, so that if people are tired, they have something easy and quick to end on. You could order the questions in the order of your event or exhibition if appropriate. In general, it is recommended that a survey moves from general questions to more specific ones, or from questions that are easy to answer at the beginning to ones that require more thought or are more emotionally charged towards the end. Think about who the survey audience is and order your questionnaire appropriately.

- Check the design and logic.

If your survey is on paper it should be easy to read, with numbered questions. Follow all the usual rules for creating an accessible print document: don’t have sentences or questions that break over a page, don’t hyphenate words over different lines, and align text on the left rather than centring or justifying it. Most digital survey software will allow you to add logic, so questions can be skipped if particular answers are given. Used carefully, this can help to avoid tiring your respondent by only giving them relevant questions, but don’t use it as a way to have a long survey.
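Survey logic of this kind can be thought of as a rule attached to a question: show it only if an earlier answer matches. The sketch below is a minimal illustration in Python, not the behaviour of any particular survey package. The question ids, the wording and the `show_if` field are all hypothetical.

```python
# Hypothetical question list: a question may carry a "show_if" rule
# of the form (earlier_question_id, required_answer).
QUESTIONS = [
    {"id": "q1", "text": "Did you visit the temporary exhibition today?"},
    {"id": "q2", "text": "How would you rate the exhibition (1 to 5)?",
     "show_if": ("q1", "yes")},
    {"id": "q3", "text": "What stopped you from visiting it?",
     "show_if": ("q1", "no")},
    {"id": "q4", "text": "Is there anything else you would like to tell us?"},
]


def questions_to_ask(answers):
    """Return the ids of the questions a respondent should see,
    given the answers they have provided so far."""
    visible = []
    for question in QUESTIONS:
        rule = question.get("show_if")
        if rule is None:
            visible.append(question["id"])  # no rule: always shown
            continue
        earlier_id, required = rule
        if answers.get(earlier_id) == required:
            visible.append(question["id"])
    return visible


print(questions_to_ask({"q1": "yes"}))  # q3 is skipped
print(questions_to_ask({"q1": "no"}))   # q2 is skipped
```

In this example every respondent still sees only three questions. The logic keeps the survey relevant to each person without making it longer.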

- Pilot the whole survey and/or individual questions.

Testing out your questions with a small sample of your intended audience is a crucial (but often overlooked) step. The more you test and refine your questions, the better your results will be and the more robust your data set. Piloting allows you to iron out ambiguities in wording, to check the survey logic and to avoid misunderstandings. Most of the time you will have no opportunity to ask follow-up questions as part of the survey process, so checking your questionnaire thoroughly increases your chances of getting reliable results. Piloting your survey should also be a chance for you to check that respondents understand multiple-choice answers in the same way, and that everyone fits into a category. It can be helpful to time people as they complete the survey. This will help you to approach your audience in the data collection phase, as you can genuinely tell them how long it will take.

- Collect the data.

Unless the audience you are evaluating is very small, you will need to survey a sample of the population. Work out how you will be sampling the audience and ensure you are not excluding anyone. You don’t want to end up relying only on motivated people to fill your survey in. This will give you plenty of people who either loved or hated the experience you are evaluating, but not many of the people in the middle. Think carefully before offering incentives: you don’t want to create a bias in the respondents your survey appeals to, and you shouldn’t offer anything related to your venue.

You need to directly ask the audience you are evaluating. Don’t ask people to speak on behalf of someone else, for example one person to do the survey to represent the group they visit with. If you are approaching people in person to fill in the survey, think about where you will be positioned, how you will present yourself and what you will say. Write a script so that all evaluators are using the same prompts.
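One common way to avoid relying only on motivated volunteers is to approach every nth visitor, starting from a randomly chosen point. This is offered as one possible sampling approach, not the only valid one. The sketch below assumes you can count visitors as they pass your position; the function name, the visitor numbers and the interval are all hypothetical.

```python
import random


def systematic_sample(visitor_count, interval, seed=None):
    """Return the positions (1-based) of visitors to approach when
    inviting every `interval`-th visitor, starting from a random
    point within the first interval."""
    if interval < 1:
        raise ValueError("interval must be at least 1")
    rng = random.Random(seed)
    # A random start avoids always picking the first arrival of the day.
    start = rng.randint(1, interval)
    return list(range(start, visitor_count + 1, interval))


# Example: roughly 200 visitors expected, approach every 10th one.
positions = systematic_sample(visitor_count=200, interval=10, seed=1)
print(len(positions), "visitors to approach; first few:", positions[:3])
```

Because the choice of who to approach is fixed in advance, the evaluator is not tempted to pick the people who look most willing to take part.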

Ethics, safety, and security

All relevant museum and evaluation staff should be fully briefed on the evaluation project before it begins, especially if you are using a sampling strategy. There will probably be no reason for you to collect identifiable data as part of a survey, and your questions should maintain respondents’ anonymity. See the GDPR template on the Practical Evaluation Tips website (in Further Reading) for an idea of how to collect the necessary permissions.

Anyone evaluating visitors should be ready to explain what they are doing and why. For a survey, the person asking the questions should know what is going to happen to the results and how quickly a report might be available. Information sheets for participants should be provided either physically, or as part of an online survey. If someone responding to the survey decides they would like to withdraw from the study, delete or destroy their survey. Online surveys should have the details of someone who can be contacted for any queries and should clarify when in the process participants can withdraw their participation. Decide in advance how you will handle any surveys which are not fully completed: will you use the responses that have been given or remove the survey if the participant does not reach the end?

What to do with the data

If you have collected the data on paper, this will need to be transcribed into a spreadsheet. You can then create simple charts or graphs. Digital survey software will be able to do a lot of the analysis for you, usually creating charts or graphs automatically.
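If you would rather not rely on spreadsheet charts, the same tallying can be done with a few lines of Python. The sketch below assumes the paper surveys have been transcribed with one row per respondent and one column per question. The column names and the data are invented for illustration.

```python
import csv
import io
from collections import Counter

# Hypothetical transcription of four paper surveys, one row per respondent.
TRANSCRIBED = """respondent,enjoyment,visited_before
1,4,yes
2,5,no
3,4,no
4,don't know,yes
"""


def tally(csv_text, column):
    """Count how many respondents gave each answer in one column."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(row[column] for row in reader)


def text_chart(counts):
    """Return a plain-text bar chart, one line per answer."""
    return "\n".join(
        f"{answer:<12}{'#' * n} ({n})" for answer, n in sorted(counts.items())
    )


enjoyment = tally(TRANSCRIBED, "enjoyment")
print(text_chart(enjoyment))
```

Note that the ‘don’t know’ answer is counted as its own category, not dropped, so the chart reflects every respondent.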

You will need to analyse free text questions separately. Immerse yourself in the answers by reading the responses to each question through several times before starting to draw conclusions. Look for repeating themes, words or emotions, or patterns in responses. Think about ways that you can back up the themes that you come up with from a questionnaire with other evaluation methods.
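Once you have read the responses and settled on some candidate themes, a simple keyword count can give a first indication of how often each theme appears. The sketch below is only a rough aid and not a substitute for careful reading: the themes, keywords and responses are hypothetical, and the matching is a plain substring check, so a keyword such as ‘light’ would also match ‘delighted’.

```python
from collections import Counter

# Hypothetical themes and the keywords that signal them. In practice these
# would come from reading the responses several times first.
THEMES = {
    "labels": ["label", "text", "caption"],
    "lighting": ["dark", "light", "dim"],
    "staff": ["staff", "guide", "volunteer"],
}


def code_response(response):
    """Return the set of themes whose keywords appear in one response."""
    lowered = response.lower()
    return {
        theme
        for theme, words in THEMES.items()
        if any(word in lowered for word in words)
    }


def count_themes(responses):
    """Count how many responses mention each theme (once per response)."""
    counts = Counter()
    for response in responses:
        counts.update(code_response(response))
    return counts


responses = [
    "The labels were too small and the room was dark.",
    "Friendly staff, but I could not read the captions.",
    "Loved it!",
]
print(count_themes(responses))
```

Responses that match no theme, such as the third one here, are worth rereading by hand: they may point to a theme you have not yet identified.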

Spend some time working out the format for your final report. How can you present the data in the most effective way possible? Remember your report has an audience, so communicate your conclusions in a way that is understandable and persuasive. This could be through different types of graphs, images, using colour or infographics.

Figure 2: A very simple infographic from the UCM blog, summarising UCM visitor figures April to September 2022

Cautions and caveats

Questionnaires are not a magic bullet for all your evaluation needs. They rely on people having good skills in the language the survey is written in. Depending on the distribution method, they can have a very low return rate, leading you to draw conclusions based on a tiny proportion of the audience. They are often the first method people turn to when evaluating, but your audience might be experiencing a level of ‘survey fatigue’. Use questionnaires with care.

Surveys should include a mixture of questions to give you both quantitative and qualitative responses. Your final results should be standardised and comparable, within the population you are studying or before and after an experience. You should ensure you include enough free text questions so that you can dig into responses, but not so many that it is overwhelming. If people are filling in the survey without an evaluator, you may end up with incomplete surveys and you have no opportunity for follow up.

You can end up with a lot of data. This can be good but can also leave you with a lot of analysis to do (and transcription if you are collecting questionnaires on paper). Be realistic about what you will have time to do and don’t ask more questions than you will have time to go through. Don’t ask questions that are unnecessary. Before you ask people to complete your survey, check that each question has earned its place in your questionnaire. Will the response be helpful? Are you inviting people to complain or comment on things that you have no control over? Is that important?

Avoid leading questions. Don’t assume that everyone is as excited about the specific area you are evaluating as you are. There can be a tendency to really drill down into responses in areas about which people might have no knowledge or opinion. A questionnaire should not feel like a test, and you don’t always need to ask ‘why?’.

When writing your report, avoid putting together too many groups of responses for questions with a scale. For example, it is better to avoid putting together all the ‘satisfied’ and ‘very satisfied’ answers into one general positive category. If your scale only has five points, there could be quite some difference between these two answers.
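The following sketch illustrates why merging scale points can hide a real difference. It uses invented responses from two hypothetical exhibitions, with ten respondents each, and compares a single merged ‘positive’ figure with the full distribution across the five-point scale.

```python
from collections import Counter

SCALE = ["very dissatisfied", "dissatisfied", "neutral",
         "satisfied", "very satisfied"]

# Hypothetical responses from two exhibitions, ten respondents each.
exhibition_a = ["satisfied"] * 8 + ["very satisfied"] * 1 + ["neutral"] * 1
exhibition_b = ["satisfied"] * 1 + ["very satisfied"] * 8 + ["neutral"] * 1


def distribution(responses):
    """Return the count for every point on the scale, in scale order."""
    counts = Counter(responses)
    return [(point, counts[point]) for point in SCALE]


def collapsed_positive(responses):
    """Return the share of 'satisfied' plus 'very satisfied' answers."""
    positive = sum(1 for r in responses if r in ("satisfied", "very satisfied"))
    return positive / len(responses)


# Merged into one category, the two exhibitions look identical.
print(collapsed_positive(exhibition_a), collapsed_positive(exhibition_b))
# Reported point by point, they clearly are not.
print(distribution(exhibition_a))
print(distribution(exhibition_b))
```

Both exhibitions score 90% ‘positive’ when merged, yet one is dominated by ‘satisfied’ and the other by ‘very satisfied’. Reporting each scale point keeps that difference visible.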

Further reading and other resources

- Ben Gammon, Effective Questionnaires for All: A Step by Step Recipe for Successful Questionnaires, Science Museum, 2001.

While not an academic article, this is a short, practical guide to the process of writing a questionnaire that will get you results. Parts of the document are showing their age (it is unlikely you will be sending out postal questionnaires, for example, and the further reading list is very short), but it is an easy read from an author with years of experience of successful survey design. This document is an excellent starting place for you to think through your survey, especially if you are evaluating a museum or exhibition.

- Caroline Jarrett, Surveys That Work: A Practical Guide for Designing and Running Better Surveys, Rosenfeld Media, 2021.

This book is a clear and accessible guide to help with all your survey needs. Working logically, it takes you through your survey goals, sampling, how to write a good question and build that into a questionnaire, and what to do with the data, concluding with a helpful list of further reading. Highly recommended.

- Felicity A. McDowall, ‘Lost in Temporal Translation: A Visual and Visitor-Based Evaluation of Prehistory Displays’, Antiquity, 97.393, 2023, pp. 707-25.

A thorough investigation into visitor evaluation of museum displays, focussing on prehistory in UK museums. McDowall uses visitor observation alongside questionnaires delivered both before and after visitors look at the displays. She presents her analysis of the data using word clouds, graphs, and infographics.

- British Museum Visitor Research and Evaluation website: https://www.britishmuseum.org/research/projects/visitor-research-and-eva...

The British Museum is a large UK museum that dedicates a lot of resource to evaluating audiences, and this webpage contains a summary of its aims for evaluation. The ‘Outputs’ section includes some example exhibition evaluation reports (although the most recent is from 2019). These rely heavily on surveys, which are usually delivered by an evaluator, through a kiosk in the exhibition or online. Some are based on very small sample sizes (for example the report for I Am Ashurbanipal: king of the world, king of Assyria is based on 197 surveys, from over 139 000 visits). Note the use of graphs, images, and tables to present the information.

- Practical Evaluation Resources: https://www.practicalevaluation.tips

This website was the outcome of collaboration between university researchers Kate Noble and Eric Jensen, with an emphasis on evaluation with children and young people. The resources section includes helpful downloads, including templates for GDPR consent, questionnaires for teachers, young people and families and a suggested survey for the evaluation of longer-term projects. The logic model template is useful if you are at an early stage of your project as it can help you think through the evaluation process.

- Sarah Jenkins, Dr Jenny Lisk and Aaron Broadley, Natural Sciences Collections Association Subject Specialist Network Report: The Popularity of Museum Galleries, Jenesys Associates Ltd, 2013.

A report for a Subject Specialist Network, persuasively using data collected in 10 museums with mixed subject matter, to measure the popularity of different types of galleries and the reasons visitors chose to visit them. Over 500 visitors were surveyed and over 250 interviewed, using questions that were tailored to each venue. Results are presented in simple graphs, tables, and direct quotes in visitors’ own words. Both the survey itself (particularly the demographic questions) and the presentation methods are showing their age, but it does a reasonable job of unpicking the data to match up the preferences of different demographic groups.

- Linda J Thomson and Helen J Chatterjee, UCL Museum Wellbeing Measures Toolkit, UCL, 2013.

A practical toolkit for evaluating wellbeing activities, tested in museums, but applicable more broadly. The resource was developed following an AHRC-funded series of workshops across the UK and includes two questionnaires (a long and a short version) and a set of ‘Wellbeing Measures Umbrellas’. These are brightly coloured figures, with either a positive or negative word associated with a five-point scale. An analysis section takes the reader through creating some basic measures for reporting.

Author: Dr Sarah-Jane Harknett.

Updated: December 2025.